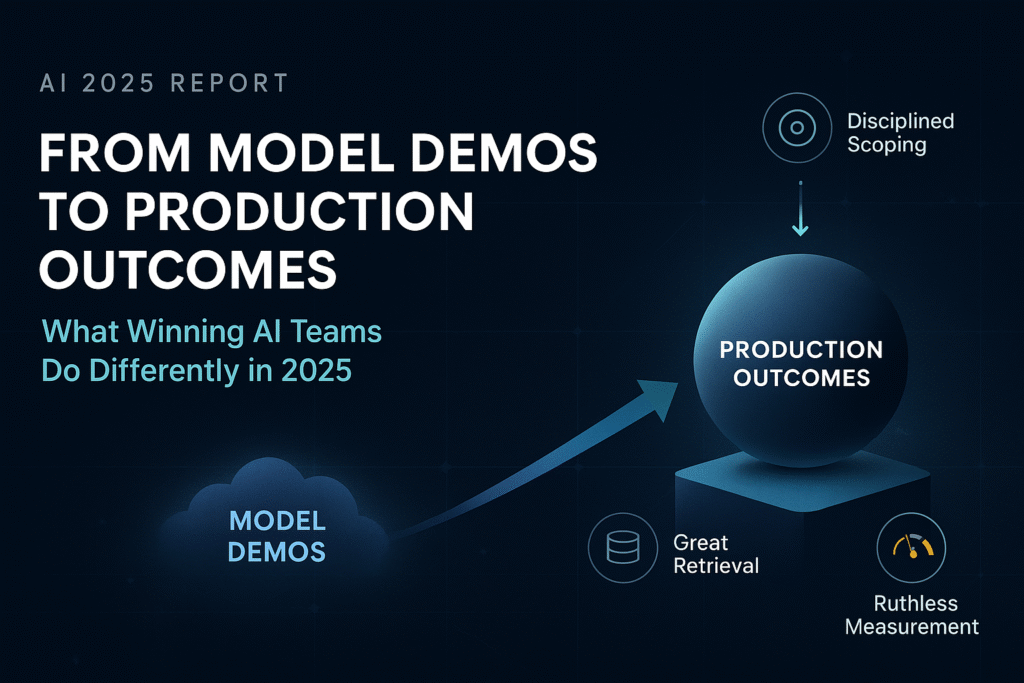

Summary: In 2025, the center of gravity moved from model demos to production outcomes. Teams winning with AI share three traits: disciplined scoping, well-stewarded retrieval, and ruthless measurement. This report distills the operating patterns we see across successful deployments.

The production bar has risen

Shipping an assistant is easy; shipping one that a business relies on is not. Reliability now matters more than raw capability. The practices below are pragmatic rather than fashionable, and they compound.

Pattern #1: Productize the work, not the chat

High performers define a unit of work with an owner, inputs, outputs, and an SLA. Examples: “Answer a policy question with a citation,” “Summarize a vendor contract into a 7‑field record,” and “Draft a support response with a checklist.” Conversation is a UX; work is the product.
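One way to make a unit of work concrete is to write it down as a small typed record. The sketch below is illustrative only: the class name `UnitOfWork`, its field names, the owner string, and the 10-second SLA are all assumptions, not something the pattern prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UnitOfWork:
    """A productized task: who owns it, what goes in, what comes out, how fast."""

    name: str
    owner: str                 # accountable person or team (hypothetical value below)
    inputs: tuple[str, ...]    # named inputs the task requires
    outputs: tuple[str, ...]   # named fields every result must contain
    sla_seconds: float         # maximum acceptable time to outcome

    def missing_outputs(self, result: dict) -> list[str]:
        """Return required output fields that are absent or empty in a result."""
        return [key for key in self.outputs if not result.get(key)]

    def meets_sla(self, elapsed_seconds: float) -> bool:
        """True when the task finished within its SLA."""
        return elapsed_seconds <= self.sla_seconds


# Hypothetical definition of the "policy question with a citation" task.
policy_qa = UnitOfWork(
    name="answer_policy_question",
    owner="hr-policy-team",
    inputs=("question",),
    outputs=("answer", "citation"),
    sla_seconds=10.0,
)
```

With a definition like this, an answer that arrives without a citation fails a check (`policy_qa.missing_outputs({"answer": "..."})` returns `["citation"]`) instead of passing as a plausible chat reply.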

Pattern #2: Retrieval is a content supply chain

Documents are split, embedded, and versioned like code. Each chunk carries metadata (owner, source, last‑reviewed date, effective dates) and a status badge that surfaces to the user. Without stewardship, RAG becomes a random‑access gamble.
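The chunk metadata and status badge described above could be sketched as follows. The field names, the badge labels, and the 365-day review window are assumptions chosen for illustration; a real deployment would set its own review policy.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Chunk:
    """One retrievable piece of a document plus its stewardship metadata."""

    text: str
    owner: str
    source: str
    version: str
    last_reviewed: date
    effective_from: date
    effective_to: Optional[date] = None  # None means no end date


def status_badge(chunk: Chunk, today: date, review_max_age_days: int = 365) -> str:
    """Derive a user-facing badge from effective dates and review age."""
    if today < chunk.effective_from:
        return "not yet effective"
    if chunk.effective_to is not None and today > chunk.effective_to:
        return "expired"
    if (today - chunk.last_reviewed).days > review_max_age_days:
        return "review overdue"
    return "current"
```

Computing the badge at query time, rather than storing it, keeps it from going stale when nobody touches the document.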

Pattern #3: Cost control by design

- Routing: small model first, escalate on uncertainty or risk.

- Caching: make prompts deterministic where you can; cache stable answers keyed by a hash of the inputs plus source versions.

- Bounded context: cap tokens per task; summarize upstream; prefer tool calls over generative reasoning when the facts already exist.
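The three controls above can be sketched in a few lines of Python. Everything here is a hypothetical illustration: models are represented as plain callables returning an `(answer, confidence)` pair, the 0.8 confidence threshold is arbitrary, and token counts are approximated by whitespace splitting rather than a real tokenizer.

```python
import hashlib
import json
from typing import Callable

# Assumed model interface: prompt in, (answer, confidence in [0, 1]) out.
Model = Callable[[str], tuple[str, float]]


def route(prompt: str, small: Model, large: Model,
          high_risk: bool = False, min_confidence: float = 0.8) -> tuple[str, str]:
    """Try the small model first; escalate on risk or low confidence."""
    if high_risk:
        return large(prompt)[0], "large"
    answer, confidence = small(prompt)
    if confidence < min_confidence:
        return large(prompt)[0], "large"
    return answer, "small"


def cache_key(task: str, inputs: dict, source_versions: dict) -> str:
    """Hash the task, its inputs, and source versions into a stable key."""
    payload = json.dumps(
        {"task": task, "inputs": inputs, "sources": source_versions},
        sort_keys=True,            # key order must not change the hash
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def fit_to_budget(chunks: list[str], max_tokens: int) -> list[str]:
    """Keep chunks in order until a rough token budget is used up."""
    kept, used = [], 0
    for chunk in chunks:
        cost = len(chunk.split())  # crude whitespace estimate (assumption)
        if used + cost > max_tokens:
            break
        kept.append(chunk)
        used += cost
    return kept
```

Including source versions in the cache key means a cached answer is invalidated automatically when any underlying document is revised, so no separate invalidation step is needed.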

Metrics that leadership accepts

Adopt a finance‑friendly scorecard: time‑to‑outcome, human‑edit rate, deflection/automation rate, error cost, and net dollar impact. Log prompts, context, model, and outputs so you can explain variance rather than argue about anecdotes.
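As a rough sketch, the scorecard can be computed directly from per-task log records. The record fields and the net-impact formula (value delivered minus error cost minus model cost) are assumptions for illustration; finance teams will have their own definitions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskLog:
    """One logged task: enough to reproduce it and to cost it."""

    prompt: str
    context_ids: tuple[str, ...]  # which chunks were supplied as context
    model: str
    output: str
    seconds_to_outcome: float
    human_edited: bool            # a person changed the output before use
    automated: bool               # resolved with no human handoff
    error_cost: float             # dollars lost to mistakes on this task
    value: float                  # dollars of value delivered (assumed known)
    model_cost: float             # dollars spent on inference


def scorecard(logs: list[TaskLog]) -> dict[str, float]:
    """Aggregate task logs into the five scorecard metrics."""
    if not logs:
        raise ValueError("scorecard needs at least one log record")
    n = len(logs)
    total_error = sum(log.error_cost for log in logs)
    total_value = sum(log.value for log in logs)
    total_model = sum(log.model_cost for log in logs)
    return {
        "avg_time_to_outcome_s": sum(log.seconds_to_outcome for log in logs) / n,
        "human_edit_rate": sum(log.human_edited for log in logs) / n,
        "automation_rate": sum(log.automated for log in logs) / n,
        "error_cost": total_error,
        "net_dollar_impact": total_value - total_error - total_model,
    }
```

Because each record keeps the prompt, context, and model alongside the outcome, a week-over-week change in any metric can be traced back to the specific tasks that caused it.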

Operating model

Pair a product manager with a platform engineer and a content owner. Weekly review: top failures, unit costs, best/worst examples, roadmap. Keep the artifact short; ship the fix.

Bottom line: The winning teams treat AI like any other critical system—observed, versioned, and aligned to business outcomes.