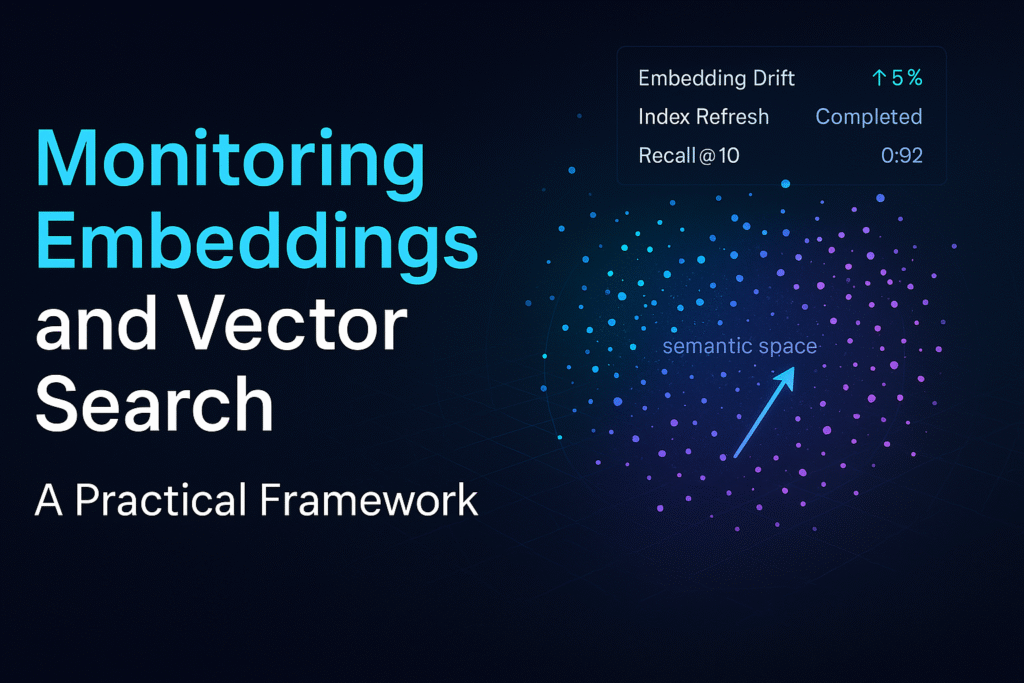

Retrieval quality degrades silently. The culprit is often embeddings and vector search, not the LLM. Here’s a monitoring strategy that catches issues before users do.

Failure modes to expect

- Empty vectors from parsing bugs or timeouts.

- Wrong dimensionality after a model upgrade.

- Drift in the embedding distribution as new content arrives or chunking rules change.

- Index corruption or stale HNSW graphs.
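The first two failure modes can be caught with a cheap check at write time. The sketch below is one possible shape for it, not a definitive implementation: `check_embedding`, `summarize`, and the failure labels are hypothetical names, and the expected dimension is assumed to come from your own model configuration. Drift and index corruption need corpus-level and index-level signals and are not covered here.

```python
import math
from typing import Dict, Iterable, Optional, Sequence


def check_embedding(vec: Optional[Sequence[float]], expected_dim: int) -> Optional[str]:
    """Return a failure label for one vector, or None if it looks healthy.

    The labels are illustrative; rename them to match your own alerting.
    """
    if vec is None or len(vec) == 0:
        return "empty"        # e.g. a parsing bug or a timed-out embedding call
    if len(vec) != expected_dim:
        return "wrong_dim"    # e.g. the model was upgraded but the index was not
    if any(not math.isfinite(x) for x in vec):
        return "non_finite"   # NaN or infinity slipped through
    if all(x == 0.0 for x in vec):
        return "all_zero"     # a placeholder vector that was never filled in
    return None


def summarize(vectors: Iterable[Optional[Sequence[float]]], expected_dim: int) -> Dict[str, int]:
    """Count vectors per label so the counts can be exported as metrics."""
    counts: Dict[str, int] = {}
    for vec in vectors:
        label = check_embedding(vec, expected_dim) or "ok"
        counts[label] = counts.get(label, 0) + 1
    return counts
```

Running `summarize` over each ingestion batch and alerting when anything other than `ok` appears is usually enough to surface these failures before they reach the index.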

Golden queries & labels

Maintain a small, versioned set of “golden” queries, each labeled with the passages it should retrieve and the answers that count as acceptable. Run them daily against staging and prod. Alert on drops in retrieval recall and precision, not just final-answer accuracy.
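A golden-set run might be scored roughly as follows. This is a minimal sketch under assumptions: `GoldenQuery`, `evaluate`, and `metrics_to_alert_on` are hypothetical names, `search` stands in for whatever retrieval call you use (assumed to return passage ids in ranked order), and the 0.05 drop threshold is a placeholder to tune.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List, Sequence


@dataclass(frozen=True)
class GoldenQuery:
    query: str
    expected_ids: FrozenSet[str]  # ids of passages that should be retrieved


def recall_at_k(retrieved: Sequence[str], expected: FrozenSet[str], k: int) -> float:
    """Fraction of expected passages that appear in the top k results."""
    if not expected:
        return 1.0
    return len(set(retrieved[:k]) & expected) / len(expected)


def precision_at_k(retrieved: Sequence[str], expected: FrozenSet[str], k: int) -> float:
    """Fraction of the top k results that are expected passages."""
    top = retrieved[:k]
    if not top:
        return 0.0
    return len(set(top) & expected) / len(top)


def evaluate(goldens: List[GoldenQuery],
             search: Callable[[str, int], List[str]],
             k: int) -> Dict[str, float]:
    """Average recall@k and precision@k over a non-empty golden set."""
    recalls, precisions = [], []
    for g in goldens:
        retrieved = search(g.query, k)
        recalls.append(recall_at_k(retrieved, g.expected_ids, k))
        precisions.append(precision_at_k(retrieved, g.expected_ids, k))
    return {
        "recall": sum(recalls) / len(recalls),
        "precision": sum(precisions) / len(precisions),
    }


def metrics_to_alert_on(current: Dict[str, float],
                        baseline: Dict[str, float],
                        max_drop: float = 0.05) -> List[str]:
    """Names of metrics that fell more than max_drop below the baseline."""
    return [m for m in baseline if baseline[m] - current.get(m, 0.0) > max_drop]
```

Storing the baseline alongside the golden set's version keeps comparisons honest when the labels themselves change.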

Telemetry to capture

- Per‑query: top‑k passages, similarity scores, source_version, and elapsed time.

- Corpus: embedding completeness %, average chunk age, near‑duplicate rate.

- Index: build times, graph size, memory use, and search latency percentiles.
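To make the list above concrete, here is one way the per-query record and two of the aggregate numbers could be represented. Treat it as a sketch: `QueryTelemetry` and its field names are assumptions (only `source_version` comes from the list above), the percentile uses the simple nearest-rank method, and real deployments would typically rely on their metrics backend for percentiles.

```python
import json
import math
from dataclasses import asdict, dataclass
from typing import List, Sequence


@dataclass
class QueryTelemetry:
    """One record per query; field names other than source_version are illustrative."""
    query_id: str
    passage_ids: List[str]          # top-k passages, in ranked order
    similarity_scores: List[float]  # one score per passage
    source_version: str             # version of the corpus or index that served the query
    elapsed_ms: float


def to_log_line(record: QueryTelemetry) -> str:
    """Serialize a record as one JSON line for a structured log."""
    return json.dumps(asdict(record), sort_keys=True)


def percentile(values: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile for an integer pct in the range 1..100."""
    if not values:
        raise ValueError("percentile of an empty sequence is undefined")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def embedding_completeness(total_chunks: int, embedded_chunks: int) -> float:
    """Percentage of chunks that have a stored embedding."""
    if total_chunks == 0:
        return 100.0
    return 100.0 * embedded_chunks / total_chunks
```

Logging the similarity scores next to `source_version` makes it possible to tell later whether a score shift came from the query mix or from a new index build.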

Rollout policy

Treat an embedding model change like a database migration. Re‑embed a sample, compare retrieval against goldens, backfill in batches, and hold out a canary index. Keep a rollback switch.
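The moving parts of such a rollout can be sketched in a few small functions. Everything here is an assumption made for illustration: the names `passes_gate`, `batches`, and `choose_index`, the 0.02 tolerance, and the idea of routing a fixed percentage of users to the canary by hashing their id. The metric dictionaries are assumed to come from a golden-set run on each index.

```python
import zlib
from typing import Dict, Iterator, List, Sequence


def passes_gate(baseline: Dict[str, float],
                candidate: Dict[str, float],
                tolerance: float = 0.02) -> bool:
    """True if the candidate is within tolerance of the baseline on every metric."""
    return all(candidate.get(m, 0.0) >= baseline[m] - tolerance for m in baseline)


def batches(ids: Sequence[str], size: int) -> Iterator[List[str]]:
    """Yield document ids in fixed-size batches for a gradual backfill."""
    for start in range(0, len(ids), size):
        yield list(ids[start:start + size])


def choose_index(user_id: str, canary_percent: int, rollback: bool) -> str:
    """Pick which index serves a user.

    crc32 gives a stable bucket per user, so the same user keeps hitting the
    same index across requests. The rollback flag overrides everything.
    """
    if rollback:
        return "baseline"
    bucket = zlib.crc32(user_id.encode("utf-8")) % 100
    return "canary" if bucket < canary_percent else "baseline"
```

Checking `passes_gate` between batches, rather than once at the start, lets a regression stop the backfill partway instead of after the whole corpus has been re-embedded.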

Result: You’ll separate model issues from retrieval issues and protect users from silent regressions.